Optimal Experimental Design

Optimal Experimental Design encompasses the concepts of proposing and analyzing various data samples with the goal of providing the most useful information with the least amount of expense.

To accomplish this, a focus is placed on finding data samples which cannot be improved without a tradeoff to at least one other performance criteria, these points are considered Pareto-optimal.

These Pareto-optimal samples are found by applying global optimization methodolgies which try to minimize across a given dataset using various mathematical methods.

Multi-Objective Optimization

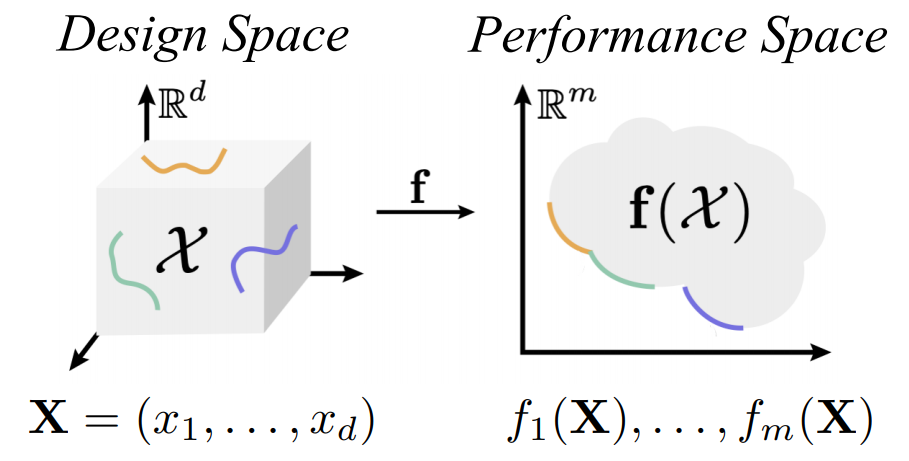

Given a design optimization problem with d design parameters and m different conflicting objectives:

our goal is to find the optimal set of design samples

that minimizes the objectives with different trade-offs, called Pareto set. The set of optimal objective

values is called Pareto front.

To this end, many population-based

multi-objective evolutionary algorithms (MOEA) are proposed, e.g., NSGA-II and MOEA/D

, which are widely used in various multi-objective problems, including financial portfolio design

, manufacturing design , and optimal control . However, MOEA algorithms typically

require a substantial number of evaluations due to the nature of evolutionary computation. This

requirement prevents them from being applied to many real-world problems, where the evaluation can

be computationally expensive and becomes the main bottleneck of the whole optimization process.

Multi-Objective Bayesian Optimization

Bayesian Optimization (BO) is originated from , and was popularized by the Efficient

Global Optimization (EGO) algorithm . BO achieves a minimal number of function evaluations

by utilizing the surrogate model and sampling guided by carefully designed selection criteria.

Taking the best of both worlds from multi-objective optimization (MOO) and BO,

multi-objective Bayesian optimization (MOBO) is designed to solve multi-objective problems

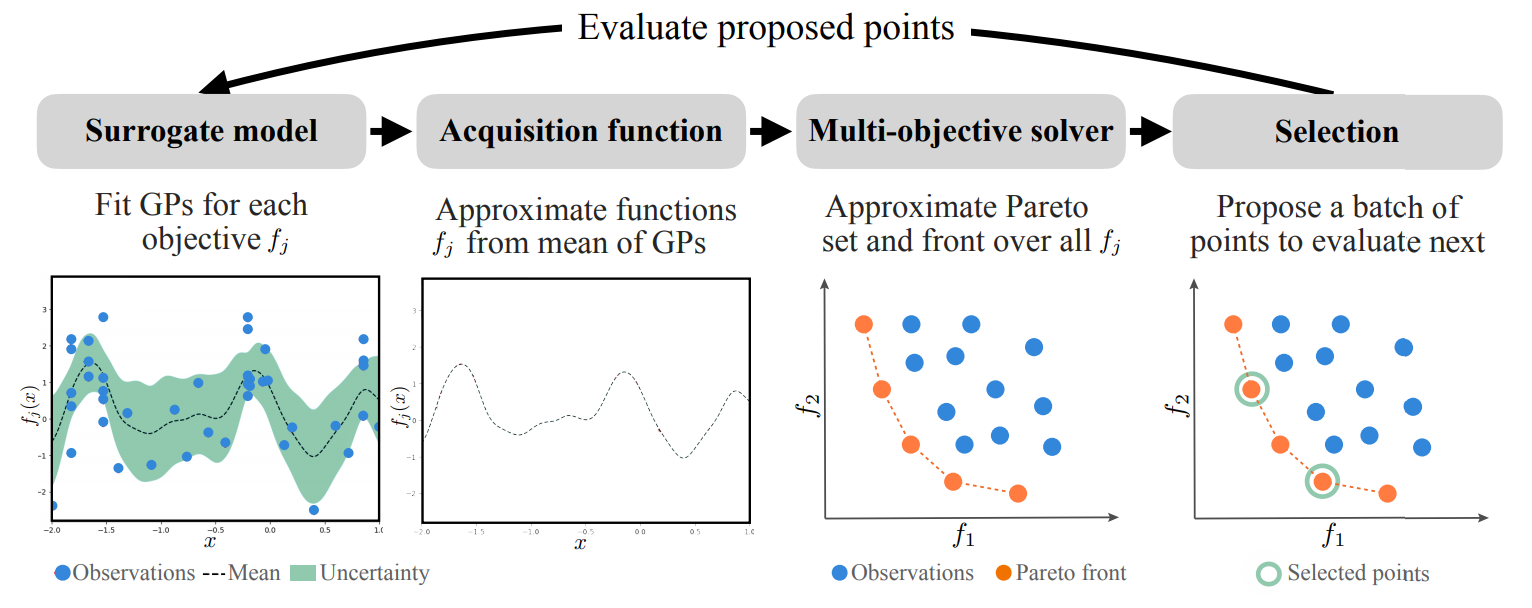

that are expensive to evaluate, e.g. DGEMO . This is achieved by the following iterative process:

Repeat until a stopping criterion is met:

- Fit surrogate models for each objective based on the current dataset, which map from design input to performance output.

- Extract acquisition functions from the fitted surrogate models (e.g. mean functions) which serve as

evaluation functions purely based on the surrogate models.

- Run a multi-objective solver (e.g. MOEA) on the acquisition functions to approximate the

Pareto set and front, which results in a set of candidate samples for selection.

- Select a single sample or a batch of samples to evaluate next with according to some criteria.

- Evaluate the selected samples on the real problem and add the evaluation results to the dataset.